Era 01 — 1990s Early Internet

The One-Person Era

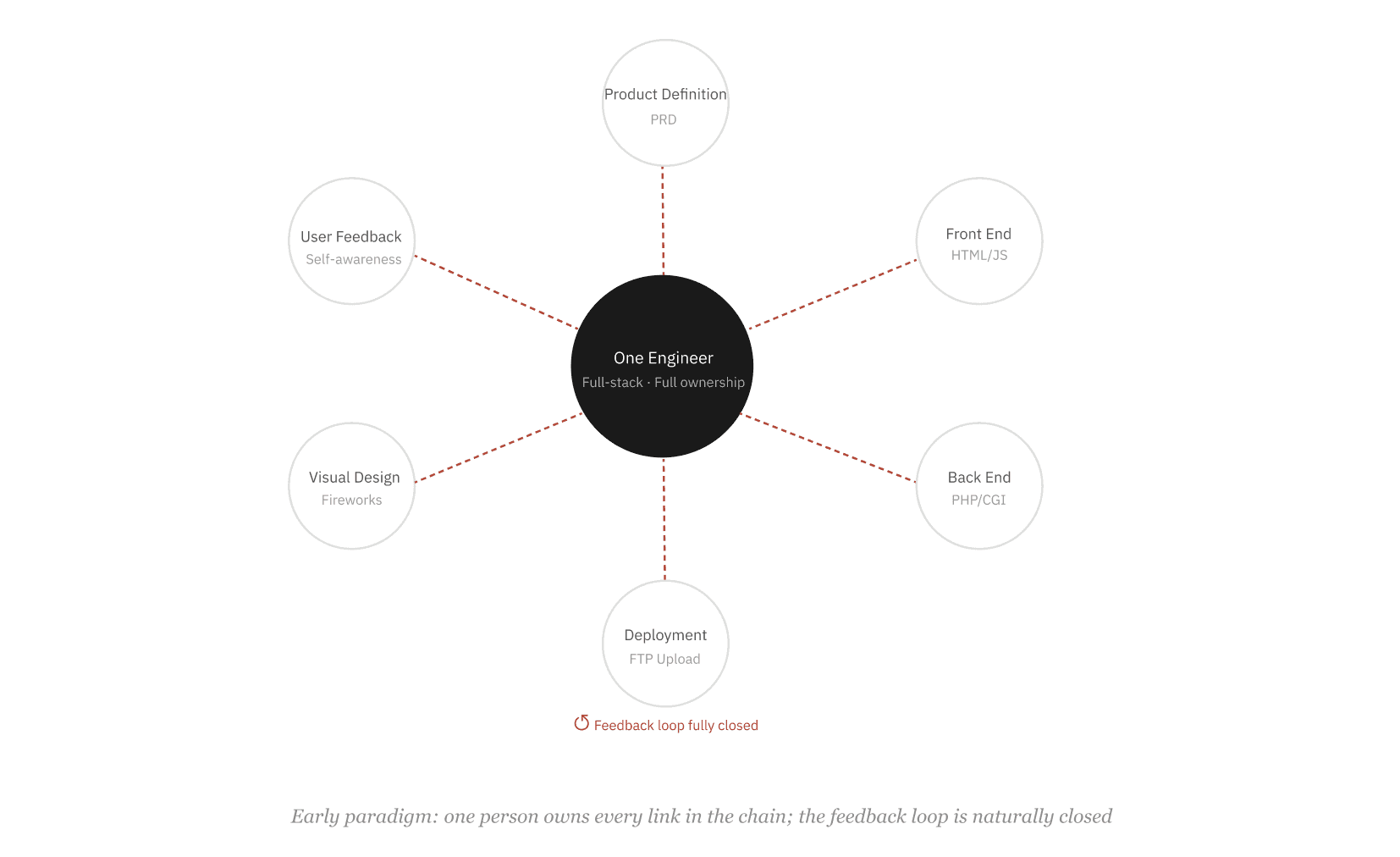

In the early days of the internet, a single person typically built an entire website. They defined what the product should do, wrote the front-end pages, coded the back-end logic, deployed it to a server, and fixed any issues themselves.

This was not because early engineers were exceptionally versatile, but because the problems were simple enough. A static page plus a few server-side scripts remained well within one person's cognitive capacity.

There was a toolset from that era called "the Web Trio" -- Dreamweaver for building pages, Flash for animation and interaction, Fireworks for image editing. The significance of this toolset was that it packaged all the complexity of Web development into a single product suite, enabling one person to take a website from visual design to launch without specializing in any single discipline.

This state came with an important byproduct: the feedback loop was closed. I wrote the code, I managed the server, I was the first to know when users had problems, and I was the one who fixed them. It was like a cook who tastes his own dish before serving it.

Era 02 — 2000s Specialization

Complexity Breaks Through, Division Emerges

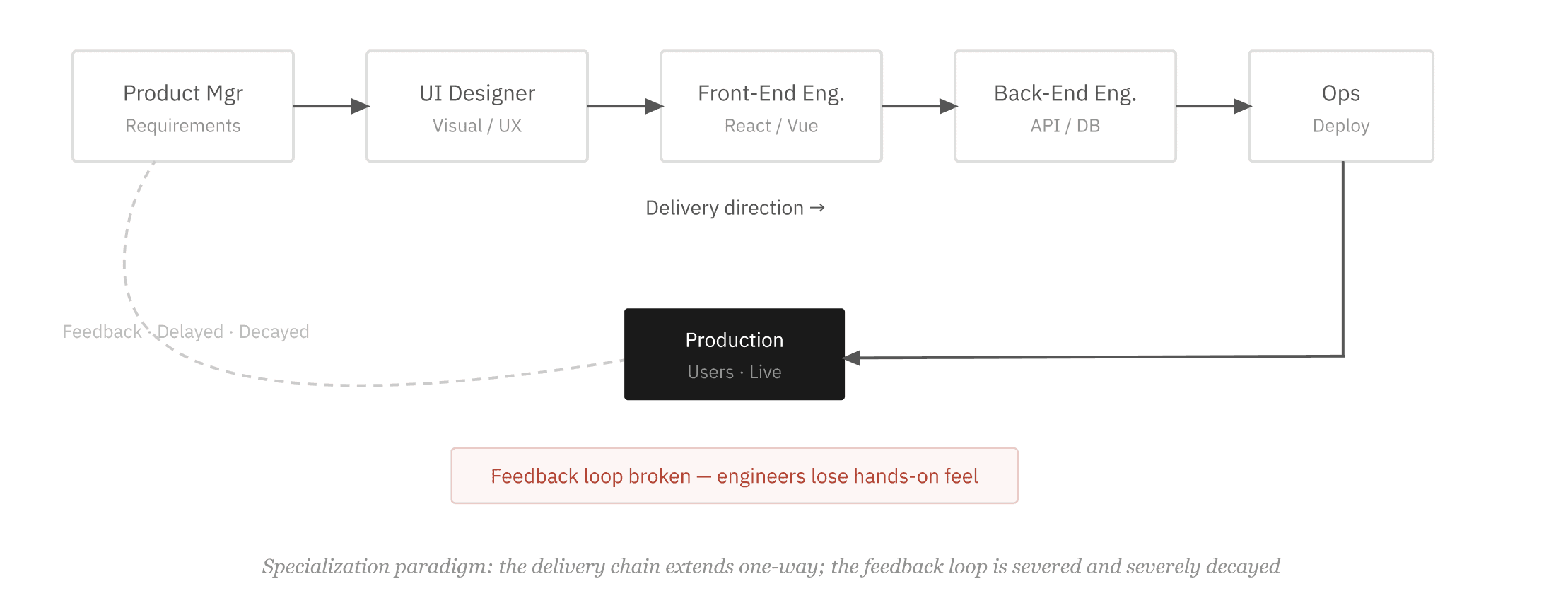

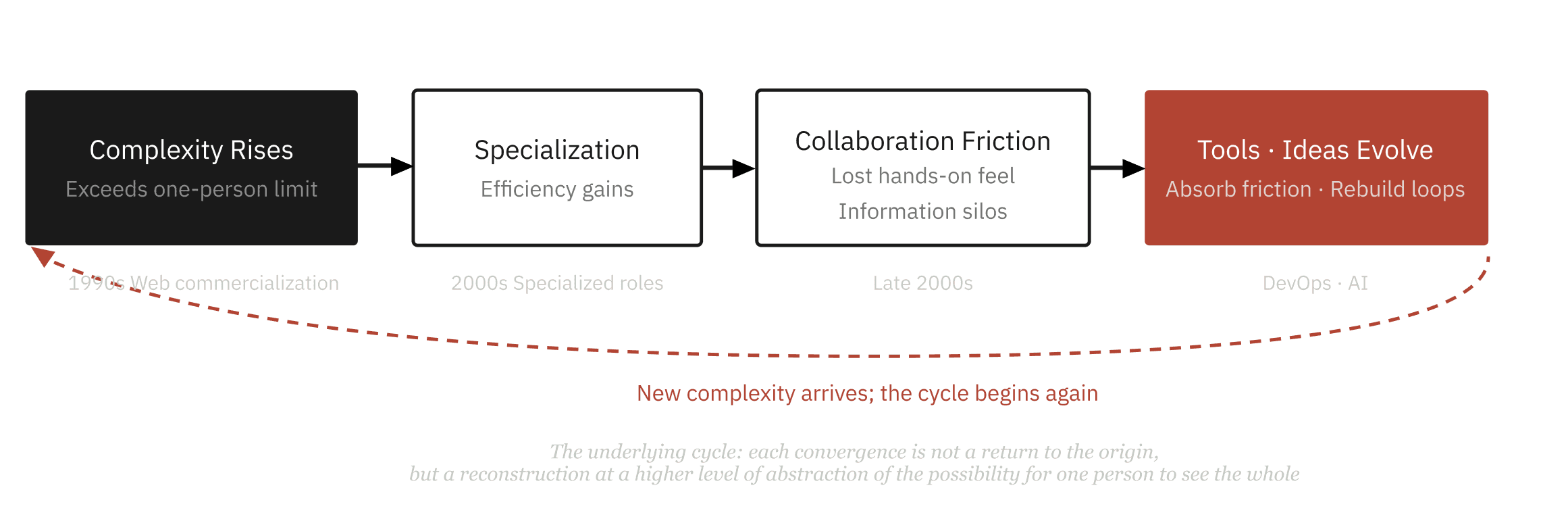

By the mid-1990s, the Web began to commercialize and the scale of problems changed. E-commerce and portal sites emerged, and systems needed to handle real concurrency, real data, and real security threats. One person's cognitive ceiling was exceeded, and division of labor became necessary.

The Web Trio disappeared along with this shift -- not replaced by better tools of the same kind, but because the "one person does everything" work style it served was no longer viable. In its place came deeper, more specialized toolchains for each discipline: Webpack and React for the front end, Figma for design, bespoke frameworks and middleware for the back end. Tools became specialized, and so did people.

This specialization raised the ceiling of what systems could achieve, making larger-scale software possible. But it also severed something.

Many engineers spend their entire career at a company without ever touching a production server. What happens after the code is committed is a black box to them. The cook never tastes his own dishes.

Era 03 — 2009 DevOps Movement

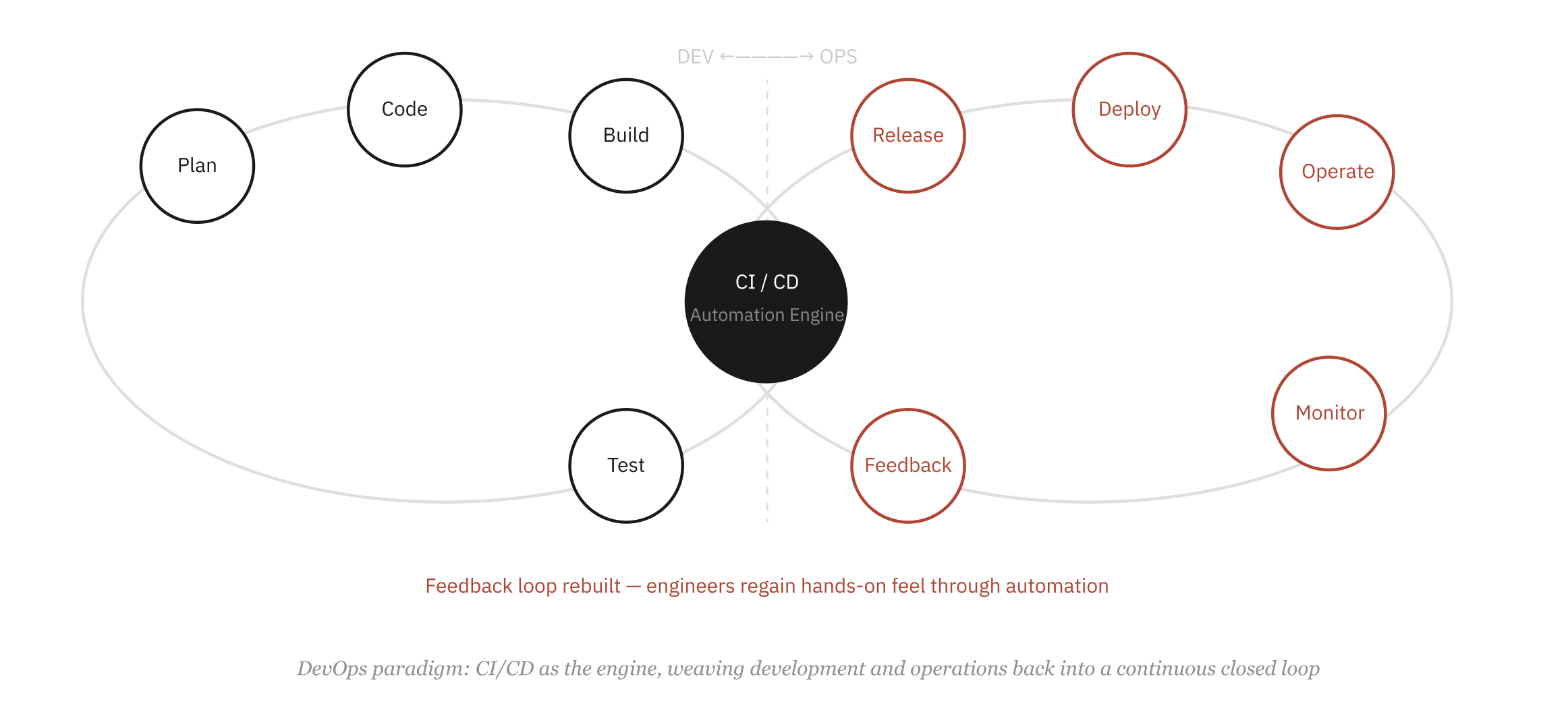

DevOps: Rebuilding the Hands-on Feel

Around 2009, the DevOps philosophy began to take shape. Its technical components are well known: continuous integration, continuous deployment, infrastructure as code, automated monitoring and alerting. But these are all means to a deeper end -- to rebuild the feedback loop that had been severed. To let the people who write the code once again feel what that code does in a real environment, and once again take responsibility for its behavior in production.

Amazon has a well-known internal motto: "You build it, you run it." This is not a statement about technology. It is a statement about who should own the responsibility and the hands-on feel.

The maturation of cloud services was the technical precondition for DevOps to succeed. Once AWS abstracted away infrastructure, the barrier to operations dropped dramatically. Developers no longer needed to be ops specialists; automation let them perceive the production environment directly. The feedback loop became possible again.

Era 04 — 2020s AI Era

A New Division of Labor: The AI-Era Pipeline

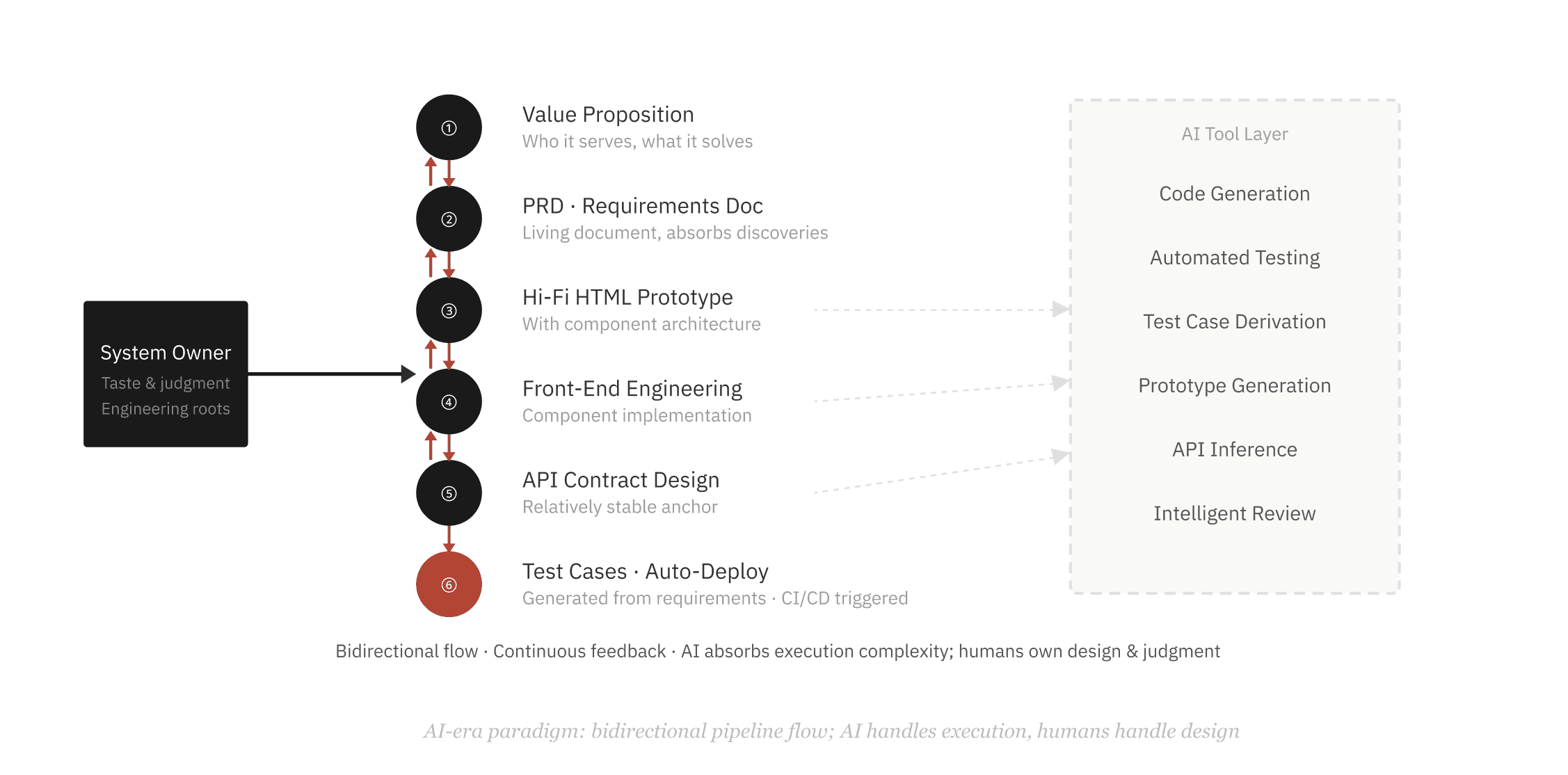

Today, AI-assisted programming is triggering a new wave of change. Code generation, automated testing, intelligent code review -- a large number of tasks that once required human effort are now being handled by tools. A solo developer can build products that would have required a five-person team a decade ago.

But this brings a new problem, deeper than what DevOps addressed. DevOps solved the problem of engineers not being able to perceive the production environment. The AI era faces a different question: when the entire production pipeline is highly automated, how do you ensure the pipeline itself is correctly designed?

Designing a pipeline and working on a pipeline are two entirely different things.

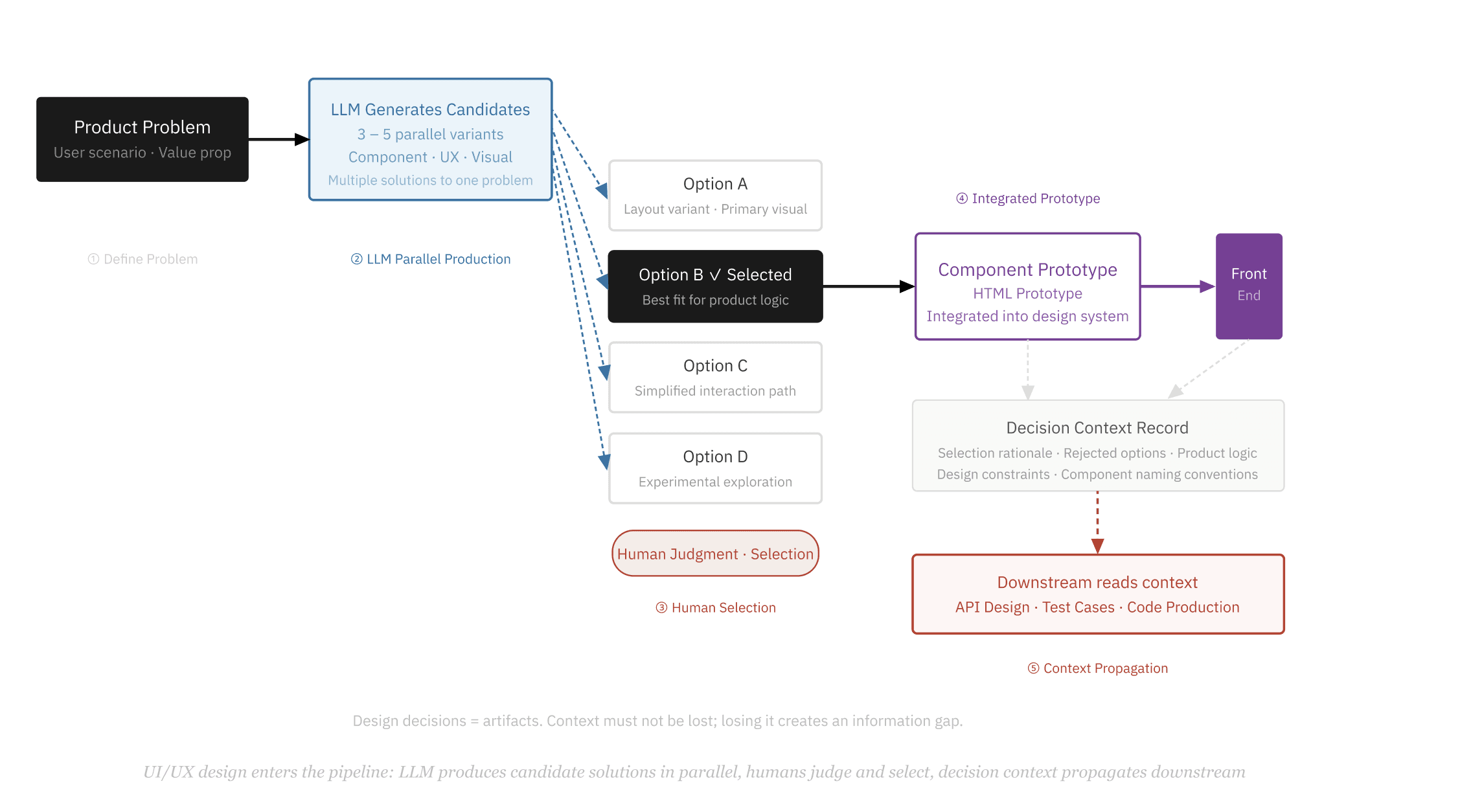

Product Experience · UI/UX — Design Enters the Pipeline

Design Is No Longer Outside the Pipeline

In the past, UI/UX design was an independent, heavily human-judgment-dependent process. Designers produced mockups, engineers attempted pixel-perfect implementation, and between them lay extensive back-and-forth communication and information loss. Design was a breakpoint in the pipeline, not a node.

Today, LLMs are changing the cost structure of this work. Design decisions can be produced far faster: for a single problem, have the LLM generate three to five (or more) candidate solutions at once. The human role shifts from "creating from scratch" to "judging and selecting among candidates." This is a fundamentally different mode of work, because the cost of judgment is far lower than the cost of creation.

Once the selection is made, a component-level prototype can be integrated directly into the overall product prototype, or even fed straight into front-end engineering. Design decisions no longer remain trapped in mockup files -- they become part of the codebase.

More importantly, all the context produced in this process -- why Option B was chosen over Option A, which interaction patterns were rejected, which visual judgments had product logic behind them -- these decision records are themselves artifacts. They are valuable input signals for downstream code production, test design, and the next iteration. Context must not be lost; losing it creates an information gap.
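To make "decision records are themselves artifacts" concrete, here is a minimal Python sketch of one such record. The `DesignDecision` class, its fields, and the checkout example are hypothetical illustrations, not a format this pipeline prescribes; the point is that the chosen option, the rejected options, and the reasons travel together as structured data that downstream code production and test design can read.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class DesignDecision:
    """One design choice plus the context that would otherwise be lost (hypothetical schema)."""
    question: str                 # the design problem being decided
    candidates: list[str]         # options generated, e.g. by an LLM
    chosen: str                   # the option a human selected
    rationale: str                # why it won, in product terms
    rejected: dict[str, str] = field(default_factory=dict)  # option -> why not

    def __post_init__(self) -> None:
        # A record is only useful downstream if it is internally consistent.
        if self.chosen not in self.candidates:
            raise ValueError(f"{self.chosen!r} is not one of the candidates")
        unknown = set(self.rejected) - set(self.candidates)
        if unknown:
            raise ValueError(f"rejected options not among candidates: {sorted(unknown)}")

    def to_json(self) -> str:
        # Plain JSON so the record can travel into prompts, tests, and the repo.
        return json.dumps(asdict(self), indent=2, ensure_ascii=False)


# Hypothetical example data.
decision = DesignDecision(
    question="How should the checkout confirmation be shown?",
    candidates=["A: modal dialog", "B: inline summary", "C: separate page"],
    chosen="B: inline summary",
    rationale="Keeps the user on one screen; fewer steps to pay.",
    rejected={"A: modal dialog": "Hides the cart while confirming.",
              "C: separate page": "Adds a navigation step."},
)
print(decision.to_json())
```

The validation in `__post_init__` is a design choice: a record that names a winner nobody generated, or rejects an option that never existed, would feed misleading context downstream.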

The ideal state of the design pipeline is not for AI to replace design judgment, but for human judgment to be focused where it is most valuable: selecting, from among multiple possibilities, the one closest to the product's truth.

This pattern has an important implication: the human role in the design process shifts from producer to editor. Judgment, taste, understanding of product direction -- these capabilities become more valuable than "knowing how to use design tools." And this circles back to the thesis about the system owner: only someone who truly understands the product's value proposition can pick the right option out of five candidates.

Currently, the cost of human intervention at this stage remains high, and there is significant room to optimize the process. But the direction is clear: as LLMs improve their understanding of product context, the quality of candidate solutions will rise, the number of nodes requiring human intervention will decrease, and the entire design pipeline will gradually converge toward automation.

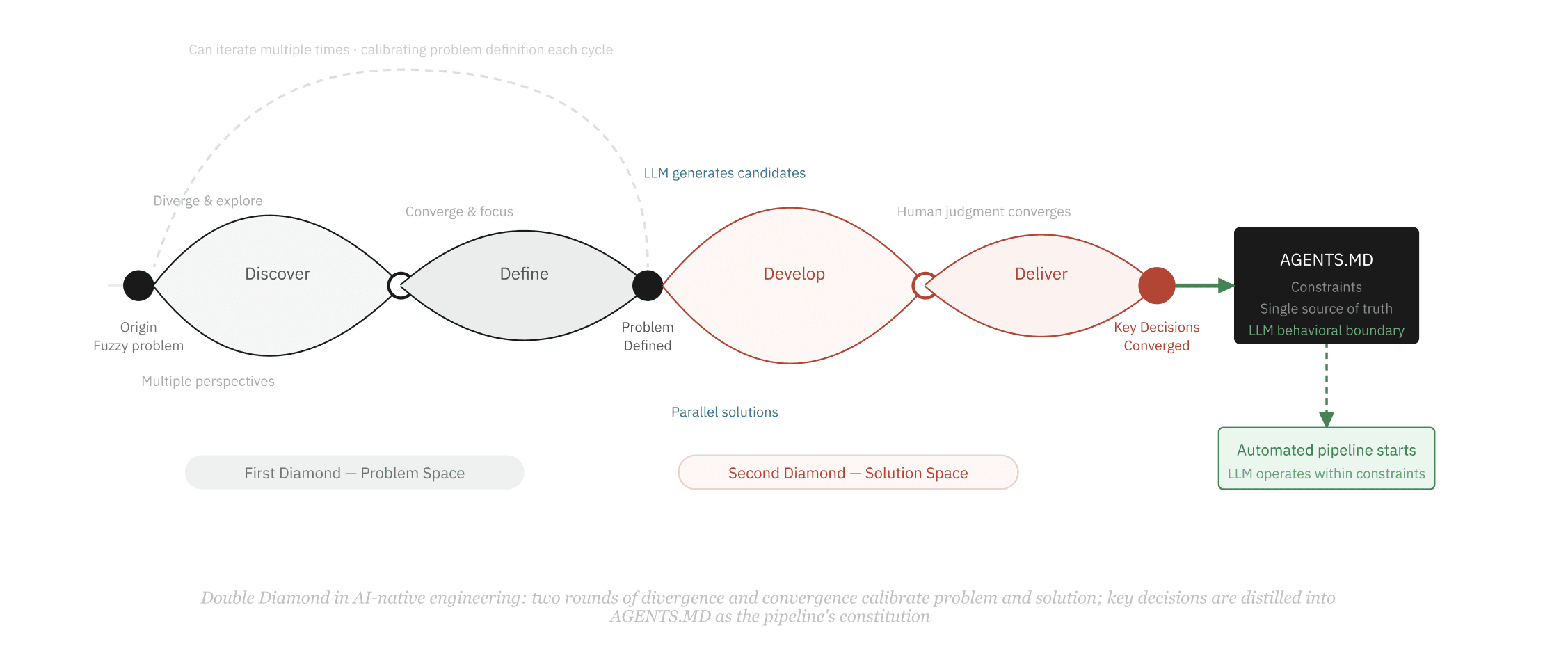

Double Diamond

Define the Problem from the Origin, Then Enter Automation

Before an automated pipeline runs at full speed, there is a more fundamental question to address: are you solving the truly right problem?

The Double Diamond model provides a structure for repeatedly calibrating the problem definition before entering production. It consists of two "diamonds," each a cycle of divergence followed by convergence. The first diamond confirms the problem itself -- diverge to explore all possible problem directions, then converge to a clear problem definition. The second diamond confirms the solution -- diverge across multiple candidate paths, then converge to an executable decision.

This process can cycle multiple times. Each cycle uses additional information to refine the understanding of the problem, until it converges on a set of truly validated key decisions.

These decisions are then written into AGENTS.MD -- the behavioral constraint file for LLM Agents in the engineering pipeline. It is not a requirements document but rather a constraint checklist and single source of truth: the LLM in all subsequent production stages operates within these boundaries, never exceeding them, never needing to re-infer them. The converged output of the Double Diamond becomes the constitution of the entire pipeline.

AGENTS.MD is the precipitate of the Double Diamond process. It translates the results of human judgment into machine-executable constraints, so automation no longer runs in the dark.

What Goes Into AGENTS.MD: the output of Double Diamond convergence, the LLM's behavioral constitution

| Section | Contents |
|---|---|
| Constraints | What must not be done · Where the boundaries are |
| Single Source of Truth | Precise statement of the product value proposition |

AGENTS.MD is not a requirements doc, not a design mockup, not a technical spec: it is the distilled key decisions from all of the above, ready for direct LLM consumption.
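As a minimal sketch of translating human judgment into machine-executable constraints, the Python function below renders converged decisions into the two categories listed above: a single source of truth and a set of constraints. The function name `render_agents_md`, the heading layout, and the sample payments product are assumptions for illustration; the article does not specify a layout for AGENTS.MD.

```python
def render_agents_md(value_proposition: str, constraints: list[str]) -> str:
    """Turn converged Double Diamond decisions into an agent-readable file.

    The section headings below are a hypothetical layout, not a standard.
    """
    truth = value_proposition.strip()
    if not truth:
        raise ValueError("the single source of truth needs a value proposition")
    rules = [c.strip() for c in constraints if c.strip()]
    if not rules:
        raise ValueError("at least one constraint is required")
    lines = [
        "# AGENTS.MD",
        "",
        "## Single Source of Truth",
        truth,
        "",
        "## Constraints (never exceed these)",
        *[f"- {rule}" for rule in rules],
    ]
    return "\n".join(lines) + "\n"


# Hypothetical product and constraints.
text = render_agents_md(
    "Small shops can take card payments in under five minutes of setup.",
    ["Never store raw card numbers.", "Do not add steps to the checkout flow."],
)
print(text)
```

Refusing to render without a value proposition or at least one constraint mirrors the idea that the file is a converged output: if the Double Diamond has not produced these yet, there is nothing to hand to the agents.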

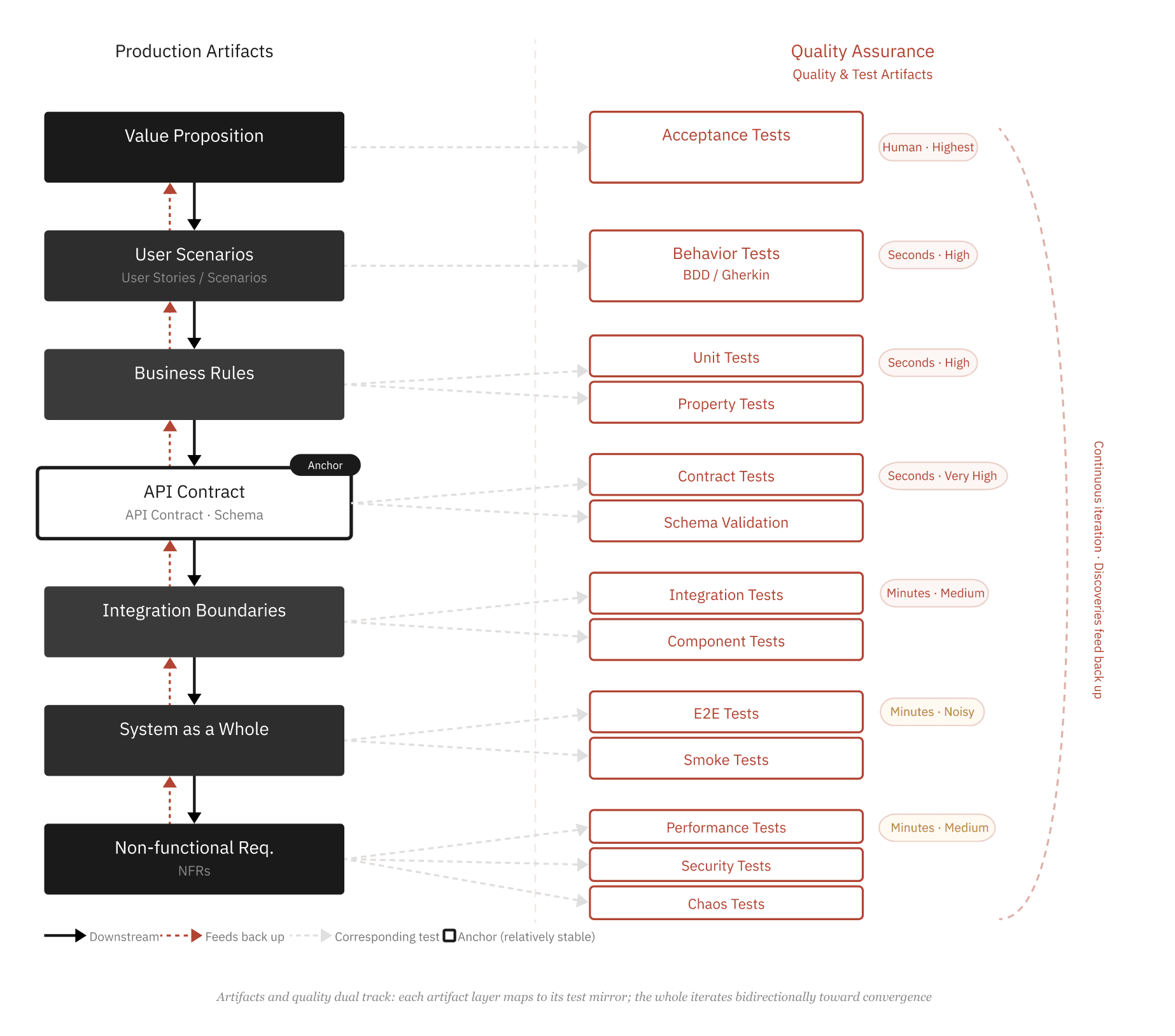

Artifacts & Quality — The Dual-Track Production Structure

Every Artifact Has a Corresponding Quality Check

In the AI-era engineering pipeline, production artifacts progress layer by layer -- from the top-level value proposition, all the way down through user scenarios, business rules, domain models, API contracts, integration boundaries, to the system as a whole and non-functional requirements. This process is not a one-way waterfall but bidirectional: discoveries at any layer can feed back up.

At the same time, each layer of artifact naturally corresponds to a type of test. This is not an afterthought quality check but a mirror of the artifact itself -- whatever the artifact defines, the test verifies. The whole structure forms a continuous, iterative closed loop.
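The layer-to-test mirroring and the upward feedback described above can be sketched as a small lookup. The artifact layers come from the text; which test type mirrors which layer is an assumption drawn from the test types listed below, and walking back through every layer above a failure is a deliberately conservative simplification of "discoveries at any layer can feed back up."

```python
# Hypothetical pairing of the article's artifact layers with test types.
# The layers are from the text; the pairing itself is an assumption.
ARTIFACT_CHAIN: list[tuple[str, str]] = [
    ("value proposition", "acceptance tests"),
    ("user scenarios", "E2E tests"),
    ("business rules", "unit and property tests"),
    ("domain models", "type checking"),
    ("API contracts", "contract tests"),
    ("integration boundaries", "integration tests"),
    ("system and non-functional requirements", "performance and chaos tests"),
]


def mirror_test(artifact: str) -> str:
    """Whatever the artifact defines, its paired test verifies."""
    return dict(ARTIFACT_CHAIN)[artifact]


def layers_to_revisit(failed_test: str) -> list[str]:
    """Feedback flows upward: a failure questions its own layer, then every layer above."""
    for index, (_, test) in enumerate(ARTIFACT_CHAIN):
        if test == failed_test:
            return [artifact for artifact, _ in reversed(ARTIFACT_CHAIN[: index + 1])]
    raise KeyError(f"no artifact layer is verified by {failed_test!r}")


print(layers_to_revisit("contract tests"))
# ['API contracts', 'domain models', 'business rules', 'user scenarios', 'value proposition']
```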

Feedback Characteristics of Each Test Type (ordered by feedback speed)

| Test Type | Feedback Speed | Signal Precision | Auto-Loop? |
|---|---|---|---|
| Type Checking | Instant | Very High | ✅ |
| Unit Tests | Seconds | High | ✅ |
| Property Tests | Seconds | High | ✅ |
| Contract Tests | Seconds | Very High | ✅ |
| Integration Tests | Minutes | Medium | ✅ |
| E2E Tests | Minutes | Low (noisy) | ⚠️ |
| Performance Tests | Minutes | Medium | ⚠️ |
| Acceptance Tests | Human | Highest | ❌ |
| Chaos Tests | Uncertain | Low | ⚠️ |

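Tests near the top of the table return fast, precise signals that can close the loop automatically; further down, feedback slows and either depends on human judgment (acceptance tests, which carry the highest-precision signal) or becomes noisier (E2E and chaos tests).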
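One practical consequence of ordering tests by feedback speed is a fail-fast runner: run the cheapest, most automatable checks first, and route each failure according to its Auto-Loop column. The sketch below assumes a simple `Check` record of its own invention, treats ⚠️ rows as not auto-loopable, and uses stub lambdas in place of real test commands.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class Check:
    name: str
    speed_rank: int          # position in the feedback-speed ordering (0 = fastest)
    auto_loop: bool          # True for ✅ rows; ⚠️ and ❌ rows treated as False here
    run: Callable[[], bool]  # returns True when the check passes


def run_staged(checks: list[Check]) -> tuple[str, Optional[str]]:
    """Run checks fastest-first and stop at the first failure (fail fast).

    A failure in an auto-loop check goes back to the automated regulator;
    anything else is escalated to a human.
    """
    for check in sorted(checks, key=lambda c: c.speed_rank):
        if not check.run():
            route = "auto-fix" if check.auto_loop else "escalate"
            return route, check.name
    return "pass", None


checks = [
    Check("acceptance tests", 7, False, lambda: True),
    Check("unit tests", 1, True, lambda: False),   # simulated failure
    Check("type checking", 0, True, lambda: True),
]
print(run_staged(checks))  # ('auto-fix', 'unit tests')
```

Stopping at the first failure keeps the slow, noisy stages from running against code that a seconds-fast check has already rejected.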

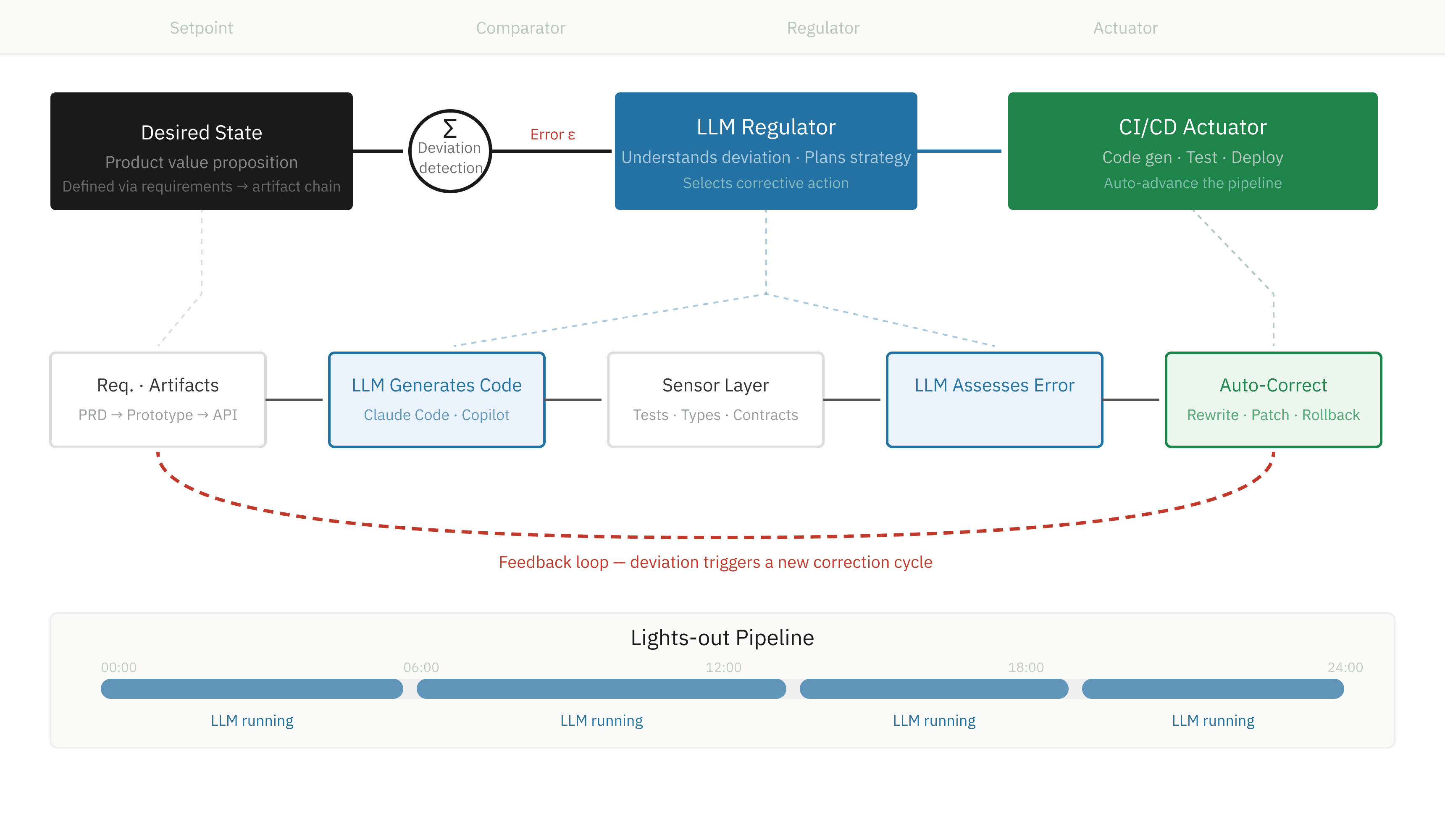

Cybernetics · Harness Engineering

Why Cybernetics Is the Discipline You Must Master Today

Cybernetics, proposed by Norbert Wiener in 1948, studies how systems maintain a target state through feedback. Its core model is elegantly simple: set a reference value, use a sensor to perceive the actual state, compare the two to detect deviation, have a regulator decide on a correction and an actuator carry it out, and repeat.

This theory matters today not because it is new, but because LLMs are the first technology to make the decision-making part of the loop, the regulator that chooses the corrective action, intelligent enough: it no longer applies only fixed rules but can understand context, assess the nature of a deviation, and choose a corrective strategy. A decision node that once required human intervention can now be handled autonomously by the LLM.

This is the essence of Harness Engineering: designing the entire engineering pipeline from requirements to deployment as a closed-loop control system. Tests are the sensors, the LLM is the regulator, CI/CD is the actuator, and the product value proposition is the setpoint. Any deviation -- a failed test, a type error, a contract mismatch -- does not need to wait for a human to discover it; the system perceives, corrects, and advances on its own.

The ultimate form of this pipeline is lights-out operation: the LLM works continuously for hours or longer, running unattended through full cycles of code generation, test verification, and automatic repair. While you sleep, the pipeline keeps running.

Without understanding cybernetics, all you can design is a semi-automated process that requires constant human oversight. With cybernetics, you can design a system that corrects itself.
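The mapping described above (tests as sensors, the LLM as regulator, CI/CD as actuator, the value proposition as setpoint) reduces to a short loop. This is a minimal sketch under heavy assumptions: `run_harness`, the integer "state," and the stub regulator are hypothetical stand-ins, where a real harness would call an LLM and a CI system. The iteration budget reflects one design choice worth keeping even in lights-out operation: the loop must stop and report when it cannot converge.

```python
from typing import Callable

State = dict[str, int]
Sensor = Callable[[State], list[str]]            # returns deviation messages
Regulator = Callable[[State, list[str]], State]  # proposes a corrected state


def run_harness(state: State, sensors: list[Sensor], regulator: Regulator,
                max_iterations: int = 10) -> tuple[State, int, list[str]]:
    """Sense, compare, regulate, actuate; repeat until no deviation remains."""
    for iteration in range(max_iterations):
        # Sensors + comparator: each message is a gap between actual state and setpoint.
        deviations = [d for sensor in sensors for d in sensor(state)]
        if not deviations:
            return state, iteration, []
        # Regulator (an LLM in the article, a stub here) chooses the correction;
        # the actuator (CI/CD in the article) is plain reassignment here.
        state = regulator(state, deviations)
    # Budget exhausted: report what is still wrong instead of looping forever.
    return state, max_iterations, [d for sensor in sensors for d in sensor(state)]


SETPOINT = 3  # hypothetical target, e.g. "all three required endpoints exist"


def endpoint_sensor(state: State) -> list[str]:
    missing = SETPOINT - state["endpoints"]
    return [f"{missing} endpoint(s) missing"] if missing > 0 else []


def add_one_endpoint(state: State, deviations: list[str]) -> State:
    return {**state, "endpoints": state["endpoints"] + 1}


print(run_harness({"endpoints": 0}, [endpoint_sensor], add_one_endpoint))
# ({'endpoints': 3}, 3, [])
```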

Cybernetics Concepts Mapped to the Engineering Pipeline

| Cybernetics Concept | In Traditional Engineering | In the AI-Native Pipeline |
|---|---|---|
| Setpoint (Reference) | PRD document | Value proposition → Artifact chain → Acceptance criteria |
| Sensor | Manual QA · Code review | Automated test layer (types / contracts / E2E) |
| Comparator | Meetings · Reviews · Human judgment | Test report + LLM deviation interpretation |
| Regulator | Engineer modifies manually | LLM (understands context · selects corrective strategy) |
| Actuator | Manual deploy · Manual commit | CI/CD · Auto-commit · Auto-rollback |
| Feedback Lag | Hours · Days · Weeks | Seconds → Minutes (depends on test layer) |

Feedback lag is the critical variable for system stability: the shorter the lag, the better the system self-corrects; the longer the lag, the more deviations accumulate into crises.
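The claim that feedback lag governs stability can be checked with a toy model: a proportional regulator that corrects a fixed fraction (`gain`) of the deviation it observes, where the sensor reading is `lag` steps stale. The model and its parameters are illustrative assumptions, not measurements of any pipeline. With the same gain, the immediate loop converges, while the delayed loop keeps correcting for a state that no longer exists, overshoots, and oscillates with growing amplitude.

```python
def simulate(gain: float, lag: int, steps: int = 200,
             setpoint: float = 1.0, start: float = 0.0) -> list[float]:
    """Toy proportional loop where the regulator sees the state `lag` steps late.

    x[t+1] = x[t] + gain * (setpoint - x[t - lag]); returns the error at each step.
    """
    xs = [start]
    for t in range(steps):
        observed = xs[max(0, t - lag)]          # stale sensor reading
        xs.append(xs[t] + gain * (setpoint - observed))
    return [setpoint - x for x in xs]


fast = simulate(gain=0.5, lag=0)
slow = simulate(gain=0.5, lag=4)
print(max(abs(e) for e in fast[-20:]))   # effectively zero: the deviation is corrected
print(max(abs(e) for e in slow[-20:]))   # large: corrections arrive late and overshoot
```

For this particular model, stability requires `gain` below 2cos(lag·π/(2·lag+1)), about 0.35 when `lag` is 4, so a longer lag forces gentler corrections and slower convergence. Shortening the lag is what lets the loop correct aggressively without blowing up.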

Underlying Logic

A Cycle, but Not a Return to the Origin

The early "one person does it all" model worked because problems were simple enough. The DevOps-era convergence rebuilt developers' awareness of the production environment through automation. The AI-era convergence is driven by tools powerful enough to let one person once again oversee and control a larger system.

The form looks similar, but the mechanisms are entirely different.

Only one thing truly never changes: at the arrival of each new level of complexity, someone must be able to see both the big picture and the details simultaneously, and design the next pipeline. That person is scarce in every era.

Deep Structure

Be a Chef Before You Design the Kitchen

McDonald's kitchen is a masterpiece of industrialization — every workflow, every workstation position is precisely engineered so that any employee with minimal training can consistently produce standardized output.

But the person who designed that kitchen had to first be someone who truly knew how to make a hamburger. If you have never made one, you do not know which step is most prone to error, where quality checkpoints should be placed, or how the order of tomato and lettuce affects the result. The kitchen you design will break in places you cannot foresee.

The same applies to software engineering today. Engineers in the AI era need two capabilities: a cybernetics-informed view of the whole system, and genuine hands-on feel for every production stage. Without the former, you are just a skilled worker who cannot optimize the system; without the latter, the pipeline you design is a castle in the air.

The early stages of software engineering are a process of knowledge production, not knowledge execution. A PRD cannot be frozen at the outset. The interaction design process will uncover new business logic that needs decisions, and those discoveries must feed back into the PRD. The engineering process will reveal permission issues that no one considered before, requiring feedback to the prototype design and even earlier stages.

The key is not which document is the single source of truth, but rather: at any given point in time, everyone on the team clearly knows where the current answer to a given question lives, and how stable that answer is right now.
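The requirement that everyone knows where the current answer lives, and how stable it is, can be made concrete as a small registry. The `Stability` levels, the `Answer` record, and the sample questions are hypothetical; the only idea taken from the text is that each open question carries both a location and a stability level, so downstream work can tell a settled answer from one still in motion.

```python
from dataclasses import dataclass
from enum import Enum


class Stability(Enum):
    OPEN = "open"              # still being explored; expect it to change
    CONVERGING = "converging"  # a leading answer exists but may still move
    SETTLED = "settled"        # safe to build on; changing it needs a decision


@dataclass(frozen=True)
class Answer:
    location: str              # where the current answer lives right now
    stability: Stability


# Hypothetical entries; locations are examples, not a prescribed layout.
REGISTRY: dict[str, Answer] = {
    "Who may issue refunds?": Answer("prototype/refund-flow notes", Stability.OPEN),
    "Checkout confirmation pattern": Answer("design decision log", Stability.CONVERGING),
    "Product value proposition": Answer("AGENTS.MD", Stability.SETTLED),
}


def safe_to_build_on(question: str) -> bool:
    """Only settled answers should be treated as fixed inputs downstream."""
    return REGISTRY[question].stability is Stability.SETTLED
```

When a permission issue surfaces mid-engineering, updating one entry (its location and its stability) tells everyone at once that the answer has moved and how much to trust it.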

Closing Note

This Is Not Idealism — It Is the Minimum Requirement

Software engineering has traveled from "one person does everything" to deep specialization, and then through DevOps to rebuild the hands-on feel. Today, AI is once again expanding the boundaries of what a single person can control. But this does not make things easier — it raises the bar. Because when tools become powerful enough, the bottleneck shifts from "can you do it" to "can you design it."

I believe any team that takes its product seriously needs one person who spans the entire picture, simultaneously ensuring four things: the quality of the output, the rigor of the production process, the definition of requirements, and the design of the entire automation pipeline. These four responsibilities cannot be split among four people, because the information flows between them are bidirectional and high-frequency — split them apart and the connections will break in places you cannot see.

This person needs to have both taste and deep, hard-won engineering experience. They must first be someone who truly knows how to make a hamburger before they can earn the right to design the kitchen's workflow.

Yet we must acknowledge a reality: the scarcity of such people is not accidental — it is structural. The entire industry's job ladders, hiring criteria, and compensation bands are designed around specialized depth. "Senior Frontend Engineer" has a clear career path and market price; "person who can see the whole system" has no corresponding category on the job market. More fundamentally, taste is cultivated through closed feedback loops, and specialization is precisely what severs those loops — in a highly specialized organization, people rarely see the downstream consequences of their own decisions. The cook never tastes his own dishes, so he never becomes a good cook. Specialization doesn't just rob products of hands-on feel — it robs people of the chance to develop taste.

Moreover, stable periods don't need this kind of person, so they don't cultivate them. Once a pipeline is running smoothly, what organizations need most are reliable operators at each station. The demand for "whole-system designers" only surfaces during paradigm shifts — like now. But the preceding stable period was precisely when specialization was at its finest and industrialization at its most thorough, systematically failing to develop these people. The moment demand appears is exactly when supply is most depleted.

When the ideal candidate is not available, teams must get as close to this condition as possible. Other roles can participate in the production pipeline design in supporting capacities, but at the core there must be one person who leads. The reason is concrete: before an engineering pipeline is truly stable, a codebase may undergo multiple destructive rewrites within a single month. Throughout this process, all context must live in one person's head. Otherwise, every update creates new breakpoints in the handoff gaps.

This is not an idealistic demand. It is the minimum condition for getting an engineering effort to actually work in today's technology landscape.

By Luyu Zhang · CEO, LangGenius, Inc. · April 2026